We’ve been doing our homework, and two things seem to be true about cybersecurity awareness training simultaneously:

- It can be very effective at protecting businesses from one of the most common security threats they face (the majority, according to the Ponemon Institute): phishing.

- Managed service providers (MSPs), often the single most reliable source of cybersecurity for small businesses, want to offer training as part of their services, but their clients’ unwillingness prevents them from doing so.

If you know, as we do, that one in three American workers admits to clicking on a phishing link in the past year, what’s the reason for such reluctance? Here are four we commonly encounter and how to overcome them.

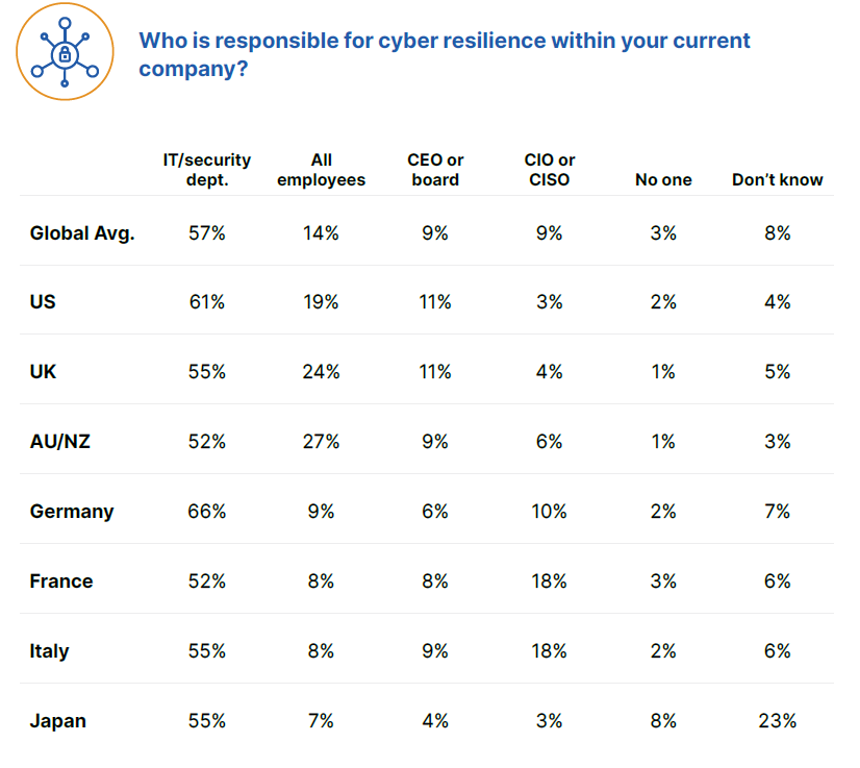

The “higher-ups” don’t see the value of training

For (the lucky) companies that have yet to be hit by a significant cyberattack, security awareness training may not hold obvious value. After all, very few organizations have zero cybersecurity measures in place. “What’s my endpoint security for, anyway?” “Threats are stopped by my firewall.” So the thinking goes…

Even if they see the need for user training from a cybersecurity standpoint, some small businesses aren’t sure it’s worth the effort. IT budgets are often strained as it is, and couldn’t those dollars be better spent on the latest high-tech trend in the cyber defense industry?

Well, the numbers don’t lie, as they say. And in survey after survey, anecdote after anecdote, the numbers tell the same story: training works. In our latest survey of more than 4,000 managed service providers, for instance, 59 percent said more suspicious emails were being reported to IT. Thirty-seven percent reported fewer security incidents in general. Our own internal data tells us that our customers who use security training see up to 90 percent less malware than those who use an antivirus alone.

Leadership expects a “set it and forget it” or “one size fits all” experience

Executives will also often back off security awareness training when they realize it’s not a one-time test or a certificate they hang on a wall in their office. It’s true that the most effective cybersecurity training programs are tailored to a specific business and delivered on an ongoing basis.

Ensuring that training is tailored to a business’s operations is one of the best ways to overcome our next objection: that training doesn’t accurately represent the threats facing employees. That means providing industry-relevant compliance training and giving riskier users more training than tech-savvy ones. This doesn’t happen by itself.

Persistence is also key when it comes to user security training. Our data indicates that the average click-through rate for a phishing simulation campaign is 11 percent. That drops to eight percent in the second campaign, and by the eleventh it’s down to five percent. Commit to 20 campaigns and you can reduce that rate to two percent.
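To put those figures in perspective, here is a rough Python sketch that turns the click-through rates quoted above into relative reductions. It assumes the 11 percent average describes a first campaign, and the names `click_rates` and `relative_reduction` are ours, chosen purely for illustration.

```python
# Click-through rates (percent) quoted above, keyed by campaign number.
# Assumption: the 11 percent average is treated as the first campaign.
click_rates = {1: 11.0, 2: 8.0, 11: 5.0, 20: 2.0}


def relative_reduction(baseline: float, current: float) -> float:
    """Return the percentage drop from baseline to current."""
    return (baseline - current) / baseline * 100


baseline = click_rates[1]

# Relative drop versus the first campaign, rounded to one decimal place.
reductions = {
    campaign: round(relative_reduction(baseline, rate), 1)
    for campaign, rate in click_rates.items()
}

for campaign, drop in reductions.items():
    rate = click_rates[campaign]
    print(f"Campaign {campaign}: {rate:.0f}% click rate, {drop}% below the first campaign")
```

By that reckoning, going from 11 percent to two percent works out to roughly an 82 percent relative drop in clicks, which is an easier number to put in front of a skeptical executive than a nine-point difference.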

Training doesn’t mirror real-world threats

Cybersecurity “tests,” especially of tactics like phishing, are of dubious effectiveness. When an employee knows a test is being administered, his or her guard goes up in unnatural ways. Results are skewed by the subject merely knowing a test is underway. Additionally, as any former student knows, studying up on cybersecurity principles is no guarantee of long-term retention.

For training to be effective, it needs to be topical and believable. A healthcare provider needs to be familiar with HIPAA compliance protocol, for instance, and be able to identify an email spoofing a large insurance provider.

Real-world training should also mirror real-world events. The COVID-19 pandemic prompted a rise in scams related to the virus, so users should be cautious of any communications that look like they could have been ripped from the day’s headlines. Training that can’t be tailored to this degree won’t be as effective.

Employees aren’t on board

Several factors can negatively affect employees’ willingness to adopt training. Some may believe they know all there is to know about cybersecurity. Some may believe it’s hopelessly over their head. For some, it’s simply not in their job description and that’s enough to stop them from pursuing training.

Whatever the reason for reluctance, buy-in starts at the top. Executives and other leaders should make it clear that they undergo the same training as their employees. (And if the C-suite doesn’t believe it’s an attractive target, encourage them to read up on spear phishing or “whaling.”)

Some training is also just poorly designed. Courses don’t have to be drawn-out, black-and-white, bubble-filling multiple-choice tests. Sometimes simply raising awareness of current security threats is enough. There’s evidence to suggest that microlearning modules are more effective. Courses can also be aesthetically pleasing and feature good UX. In fact, that’s key to getting employees to engage.

The right approach requires the right platform

Whatever the reason a client or employee has for being reluctant to adopt security awareness training, there’s a good chance it can be overcome with the right tool. Visit the Webroot® Security Awareness Training page to learn more and to see why the research firm Info-Tech had this to say about Webroot:

“Our SoftwareReviews data shows that Webroot and their customers have a very positive relationship, with 91% of sentiments being positive.”